optimizing-rust.md

This article is a breakdown of how I built hdk-rs, my toolchain for a defunct game's revival development, and how through extensive testing, profiling, and optimization, I managed to turn it from a simple commodity to the most portable and performant tool available in the scene.

Before we get into the heavy Rust optimizations though, we need to talk about the target hardware.

⚠️ Disclaimer: everything I'm going to say in this article regarding time is strictly measured on my own machine, which is a 14th Gen Intel Core i5-14600KF. Your mileage may vary, but the relative performance improvements should be consistent across different hardware.

The PlayStation 3 console

PlayStation Home is an old game (it died in April 2015), and it was built for a console with extremely tight memory constraints.

If you're familiar with the PS3, you might know it technically has 512MB of RAM; with that already being a horribly tight amount, it gets even worse by being split halfway between RAM and VRAM. This means only 256MB is available for system memory, while the other 256MB is strictly reserved for the RSX GPU.

Because you can't just load massive archives into memory, the developers had to get creative with their archive formats. ZIP or TAR were not options (and regardless: using a custom archive format adds to their "security through obscurity" approach). They created their own custom archive format, which is basically a glorified file table with a big binary blob at the end of it.

The SHARC archive format

To survive on the PS3, archives were heavily optimized for "cherry-picking" files. The archive format we're looking at today is called "SHARC".

The structure is brutally efficient:

- A main header at the very start.

- An encrypted Table of Contents (ToC) immediately following it.

- The actual raw file data referenced by the ToC's offsets, optionally compressed and encrypted.

Furthermore, the game's archive formats heavily utilized symmetrical CTR encryption. Why? Because CTR allows for random access and heavy parallelism across the PS3's PPU and SPUs without needing to decrypt the entire file sequentially.

The ToC is also encrypted with a simple AES cipher, and it contains metadata about each file in the archive, such as its name, offset, size, and whether it's compressed or not.

The Segmented Zlib (aka EdgeZlib) algorithm

Sony used Zlib a bit creatively. They took the standard DEFLATE algorithm and modified it to support "segmented" compression. This means that instead of compressing the entire file as one block, they split it into smaller segments (up to 64KB each) and compressed each segment independently. Then, they stored the compressed and uncompressed sizes of each segment in the 4 bytes before the actual stream.

Sony's code always used the highest compression level, which is level 9. This effectively forces us to always use the slowest compression algorithm, which is a nightmare for performance that we're going to have to solve.

Our sample archive: COREDATA.SHARC

You can't optimize without a baseline, so I picked the perfect candidate to torture-test my toolchain: COREDATA.SHARC.

This archive is the lifeblood of the game. It holds every file necessary for the UI, basic data, fallbacks for missing CDN content, localization files, avatar 3D models, UI textures, and sounds.

It is also incredibly chunky:

- 1442 individual entries

- All kinds of files: 3D models, textures, audio files, XML configs, etc.

- All compressed at maximum compression settings

- All encrypted with a custom XTEA cipher implementation

It's perfect.

The previous state of the art

Before I started this project, the community relied on legacy C# tools to repack these files. They worked (very well, in fact), but they were painfully slow.

Repacking the full COREDATA.SHARC archive with the existing C# alternatives usually takes around 20 to 30 seconds. When you're actively developing things, testing one-line changes and restarting the entire game on the RPCS3 emulator every time it crashes... this quickly gets annoying.

I knew we could do better. Much better.

It's analysis time!

Before I started throwing threads at the problem, I needed to see exactly what my baseline was. I used my initial naive, completely serial version of the repacker in Rust: no fancy optimizations, no multithreading, just a correct implementation of the SHARC format in Rust, along with the compression and encryption algorithms.

I ran it through samply, an amazing Rust profiling tool for all kinds of binaries (even non-Rust ones), to get a proper flamegraph of where the CPU was spending its time.

Right out of the gate, repacking the COREDATA.SHARC archive took around 7 seconds. Compared to the 20–30 seconds the old C# tools took, a 7-second serial run is already impressive. But I wasn't going to call it a day there.

Looking at the samply output, I broke down the time spent into three main categories:

- ~50% of the work was spent purely on compression (running on a single core).

- ~40% was spent on I/O (reading and writing to disk).

- ~10% was spent on cryptography and other miscellaneous tasks.

The obvious start: multithreading the compression

If there's one thing I hate, it's the lazy "just parallelize the whole loop" approach. If you just shove everything into a parallel iter, you end up oversaturating the CPU with scheduling work for trivial operations, causing lock contention and actually slowing down the easy parts.

Instead, I brought in rayon but implemented it strictly on the expensive routines: the compression. I kept the I/O orchestrated and serial-friendly so the disk wasn't thrashing, while the CPU cores gnawed through the DEFLATE streams.

The time immediately cut down from 7 seconds to 2 seconds. Nice!

We're off to a good start, but we can do even better.

Let's get down to lower levels

Two seconds is very nice, but when you're writing systems-level Rust, you know you can squeeze more out of the memory control it gives you. Allocating memory on the heap is expensive, and doing it thousands of times a second is a great way to introduce latency.

I did some research and implemented two massive quality-of-life memory optimizations:

- smallvec: I swapped out standard vectors for SmallVec in a couple of places handling small files (like tiny config texts). If the buffer is under a certain threshold, it just stays on the stack. Zero heap allocation. And in the case our buffer exceeds the threshold, it seamlessly spills over to the heap.

- thread_local: I set up a thread-local storage scratchpad for the compression writers. Instead of allocating a new buffer for every single file being compressed, each thread gets its own reusable workspace. This maximises CPU cache locality.

These two changes alone shaved almost a full second off the execution time, bringing our best current time down to 1.1 seconds.

We're almost there.

The final frontier: SIMD-accelerated compression with Intel ISA-L

I ran samply one more time. The landscape of the program had completely changed. Now, 90% of the execution time was spent just on compression, with everything else squashed down to 10%.

The architecture was perfect; the only thing holding me back was the math itself. I needed a faster DEFLATE implementation.

Enter Intel's ISA-L (Intelligent Storage Acceleration Library). It's a suite of heavily optimized, SIMD-accelerated functions designed specifically for high-throughput storage applications.

Implementing it wasn't even difficult at all. The Rust ecosystem is fantastic, and the isal-rs crate handles all the low-level C bindings and build scripts for me, exposing a very clean and convenient API to work with.

Final results

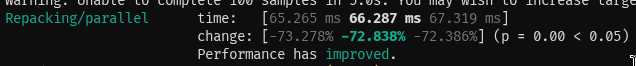

Once ISA-L was hooked up to my thread_local scratchpads and fed by rayon, I ran the benchmark one last time.

The time dropped to a whopping 66ms. Sixty-six milliseconds to repack the entire COREDATA.SHARC archive, with perfect byte-for-byte accuracy, and fully respecting the SHARC format's quirks.

That is a ~30,200% speed boost (over 300x faster) over the previous time of 20 seconds. Absolute madness.

I think we're at a good place to call it a day. The toolchain is now so fast that if you blink you might miss the repack finishing.

But... why worry so much about performance?

It's a valid question. Why go through all this trouble to optimize a toolchain for a game when existing tools worked fine, albeit slowly?

On modern machines, this is perfectly fine for the average user. You just click a button and wait 20 seconds. No huge deal.

However, hdk-rs wants to be my end-all-be-all tool for the community, and a major interest of mine was always portability. I wanted it to run on anything, from a high-end gaming PC down to an iPhone, and even on Android TVs (yes, I actually ran it on a Samsung Series 7 Smart TV).

And that's not even entering single-threaded performance. If you run it through WASM (hdk-rs has WASM bindings), where you don't have multithreading, all of the low-level optimizations still apply and help.

And even then, it's just a fun challenge. I love squeezing every last drop of performance out of my code, and this project was a perfect playground for that.

Thank you for reading! If you want to check out the code, it's all open source on GitHub, licensed AGPLv3. A CLI is also available here for all major platforms (though ISA-L is only automatically-enabled on the Linux CI build - sorry!).